The entire R Notebook for the tutorial can be downloaded here. If you want to render the R Notebook on your machine, i.e. knit the document to HTML or PDF, make sure that you have R and RStudio installed. You also need to download the bibliography file and store it in the same folder as the notebook.

This tutorial builds heavily on, and uses materials from, a tutorial on web crawling and scraping using R by Andreas Niekler and Gregor Wiedemann (see Wiedemann and Niekler 2017). Their tutorial is more thorough, goes into more detail than this one, and covers many more useful text mining methods. An alternative approach to web crawling and scraping is the RCrawler package (Khalil and Fakir 2017), which is not introduced here, although inspecting the package and its functions is highly recommended.

If you are new to R, you will find an introduction to it and more information on how to use it here. For this tutorial, we need to install certain packages from an R library so that the scripts shown below run without errors. Before turning to the code below, please install these packages; if you have already installed them, you can skip this section. Installing all of the libraries may take between 1 and 5 minutes, so there is no need to worry if it takes some time. If you have not done so yet, please also install the phantomJS headless browser. Once the packages (and the phantomJS headless browser) are installed, we can activate them.

If it is important that you match only links, and only from among those top-level domains, you can do:

    wget -qO- |

or something like it, though for some seds you may need to substitute a literal \newline character for each of the last two ns. In either case (but probably most usefully with the latter) you can tack a | sort -u filter onto the end to get the list sorted and to drop duplicates.
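As an illustration of the same idea without depending on how a particular sed treats newlines, here is a minimal sketch. It is an assumption and not the command from the text: the hypothetical function quoted_urls uses tr to put every quote-delimited token on its own line, keeps the tokens that begin with http:// or https://, and finishes with the sort -u filter.

```shell
#!/bin/sh
# Hypothetical helper (not from the original text): list the distinct
# http(s) URLs that appear inside single or double quotes in the input.
quoted_urls() {
    cat "$@" |
    # Turn both quote characters into newlines so that each quoted
    # attribute value ends up on a line of its own.
    tr "\"'" '\n\n' |
    # Keep only the lines that start with an http or https scheme.
    grep -E '^https?://' |
    # Sort and drop duplicates; LC_ALL=C keeps the order predictable.
    LC_ALL=C sort -u
}

# Made-up sample input, so the sketch runs without network access.
quoted_urls <<'EOF'
<a href="https://example.com/a">first</a> <img src='http://example.org/i.png'>
<a href="/relative/path">skipped</a> <a href="https://example.com/a">again</a>
EOF
```

To use it on a live page, the output of wget -qO- followed by a URL of your choice could be piped into quoted_urls instead of the sample text.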

I have found a solution here that is IMHO much simpler and potentially faster than what was proposed here. I have adjusted it a little to support https files.

    lynx -dump -listonly -nonumbers "" > links.txt

PS: You can replace the site URL with a path to a file and it will work the same way.

    lynx -dump -listonly -nonumbers "some-file.html" > links.txt

If you just want to see the links instead of placing them in a file, try this instead:

    lynx -dump -listonly -nonumbers "some-file.html"

The result will look similar to the following:

    …utm_medium=hppromo&utm_campaign=auschwitz_q1&utm_content=desktop

There is no need to check for href or other link sources, because lynx -dump extracts all the clickable links from a given page by default. The only thing left to do is to parse the result of lynx -dump with grep to get a cleaner version of the same result. But beware that nowadays people add links like src="//blah.tld" for the CDN URIs of libraries; I didn't want to see those in the retrieved links.

As I said in my comment, it's generally not a good idea to parse HTML with regular expressions, but you can sometimes get away with it if the HTML you're parsing is well-behaved. To get only URLs that are in the href attribute of <a> elements, I find it easiest to do it in multiple stages. From your comments, it looks like you only want the top-level domain, not the full URL. In that case you can use something like this:

    grep -Eoi '<a [^>]+>' source.html |

where source.html is the file containing the HTML code to parse. This code will print all top-level URLs that occur as the href attribute of any <a> elements in each line. The -i option to the first grep command ensures that it works on both <a> and <A> elements. I guess you could also give -i to the 2nd grep to capture upper-case HREF attributes; OTOH, I'd prefer to ignore such broken HTML. My output is a little different from the other examples as I get redirected to the Australian Google page.

You can also add in \d to catch other numeral types.
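The multi-stage href extraction described in this section might look like the sketch below. Only the first stage is fixed by the text; the patterns in the second and third stages, and the function name top_level_urls, are assumptions made for illustration.

```shell
#!/bin/sh
# Hypothetical helper: print the scheme and host of every URL found in
# the href attribute of an <a> element, in order of appearance.
top_level_urls() {
    cat "$@" |
    # Stage 1: isolate the opening tags of <a> elements. The -i option
    # makes this stage accept <A ...> as well.
    grep -Eoi '<a [^>]+>' |
    # Stage 2 (assumed pattern): keep the double-quoted href attribute.
    # Without -i, upper-case HREF attributes are ignored here.
    grep -Eo 'href="[^"]+"' |
    # Stage 3 (assumed pattern): keep the scheme and host, dropping the
    # path and query string.
    grep -Eo 'https?://[^/"]+'
}

# Made-up sample input, so the sketch runs without network access.
top_level_urls <<'EOF'
<a href="https://www.example.com/path?q=1">one</a> <A href="http://example.org/">two</A>
<img src="http://img.example.net/logo.png">
EOF
```

Splitting the work into stages keeps each pattern short: the img element never reaches the later stages, because the first grep passes on only <a> tags.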

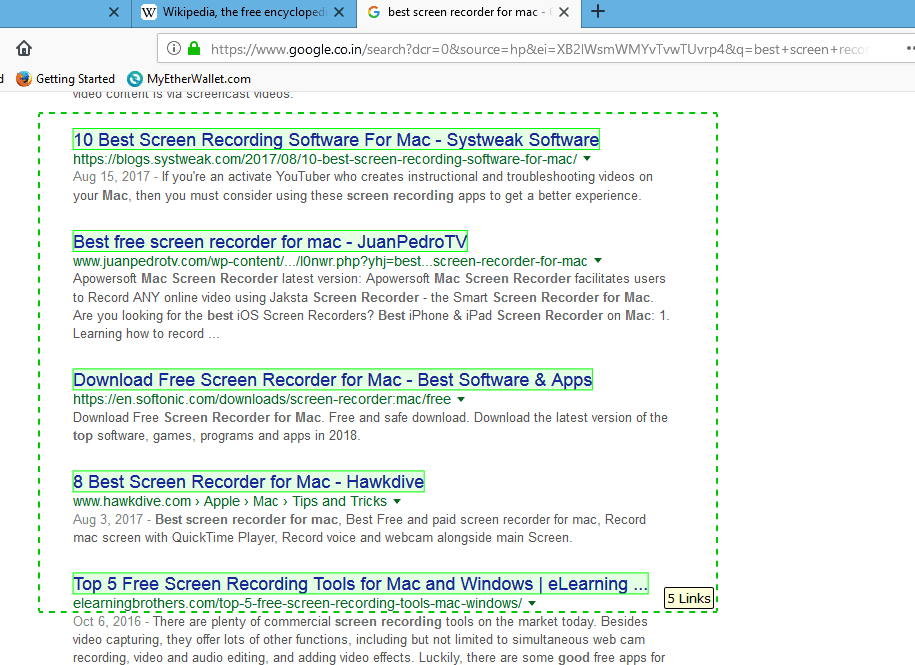

Regex might not be the best way to go, as mentioned, but here is an example that I put together:

    cat urls.html | grep -Eo "(http|https)://*" | sort -u

grep -o : outputs only what has been matched.
sort -u : sorts and removes any duplicates.

The same filter can be applied directly to a downloaded page:

    wget -qO- | grep -Eo "(http|https)://*" | sort -u
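The pattern as printed above stops right after ://, so on its own it matches little more than the scheme. A minimal sketch of a fuller filter follows. The bracket expression listing the characters allowed in a URL is an assumption, not the original pattern, and extract_urls is a hypothetical helper name.

```shell
#!/bin/sh
# Hypothetical helper: print each distinct http(s) URL found in the given
# files (or on standard input), one per line.
extract_urls() {
    cat "$@" |
    # ASSUMPTION: the bracket expression below is an illustrative list of
    # URL characters; widen or narrow it to suit your input.
    grep -Eo 'https?://[A-Za-z0-9./?=_%:&#~+-]+' |
    # Sort and drop duplicates; LC_ALL=C keeps the order predictable.
    LC_ALL=C sort -u
}

# Made-up sample input, so the sketch runs without network access.
extract_urls <<'EOF'
<a href="https://example.com/page?id=1">one</a>
<a href="http://example.org/">two</a> <a href="https://example.com/page?id=1">again</a>
EOF
```

Because the match ends at the first character outside the bracket expression, the closing quote of each attribute is left out of the result.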
